Mistral's Devstral 2: The Return of Sovereign Code AI

- Bastien

- 17 Dec, 2025

The European Counter-Strike in Code AI

With the release of Devstral 2 and its lightweight counterpart, Devstral Small 2, Mistral AI is reclaiming territory in a sector recently dominated by Chinese laboratories such as DeepSeek and Alibaba's Qwen team. These models mark a maturity milestone for the French unicorn, which is moving beyond general-purpose chat toward specialized, high-performance coding agents. The promise is bold: GPT-4-level coding proficiency directly on a developer’s laptop, without sending proprietary code to the cloud.

The “Mistral 2” Ecosystem: Efficiency Over Size

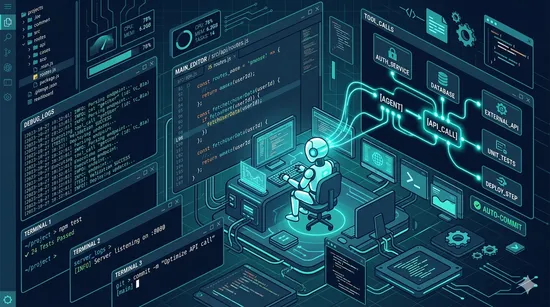

Devstral is not an isolated release; it is the specialized edge of the broader Mistral 2 suite. This second generation of models reportedly doubles down on the “Mixture of Experts” (MoE) architecture, which activates only a subset of the network's parameters for each token, speeding up inference while supporting long context windows (up to 128k tokens). While Mistral Large 2 handles complex reasoning and multi-step logic in the cloud, Devstral Small 2 is engineered for low-latency autocompletion and local refactoring, and is meant to fit within the memory of consumer hardware such as RTX 40-series GPUs or Apple Silicon Macs, which use unified memory rather than dedicated VRAM.

Local vs. Cloud: The Privacy Pivot

For enterprise developers, the allure of Devstral 2 isn’t just performance; it’s governance. Cloud-based assistants like Copilot have faced scrutiny over data leakage and IP retention. By offering a model capable enough to run on-device, Mistral lets companies keep their entire codebase behind their firewall. Benchmarks suggest that Devstral Small 2 achieves a “pass@1” rate (the share of tasks solved by the model's first generated attempt) on Python and JavaScript tasks that rivals much larger, server-hosted models, which would make the trade-off between privacy and capability far smaller than before.
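
For readers unfamiliar with the metric, here is a minimal sketch of how pass@k is commonly estimated from repeated sampling, using the standard unbiased estimator 1 - C(n-c, k) / C(n, k). The function names and the sample counts below are illustrative assumptions, not figures from Mistral's benchmarks.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k for one task.

    n: number of samples generated for the task
    c: number of those samples that passed the unit tests
    k: number of attempts the metric allows
    """
    if not (0 <= c <= n) or not (1 <= k <= n):
        raise ValueError("expected 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Fewer than k failing samples: any draw of k includes a pass.
        return 1.0
    # Probability that a random draw of k samples contains no passing one,
    # subtracted from 1.
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results: list[tuple[int, int]], k: int) -> float:
    """Average pass@k over a benchmark, given (n, c) pairs per task."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


# Hypothetical results for three tasks, 10 samples each (made-up numbers).
example_results = [(10, 3), (10, 0), (10, 10)]
example_score = mean_pass_at_k(example_results, k=1)
```

With k = 1 the estimator reduces to c / n per task, so pass@1 is simply the average fraction of first attempts that pass the tests.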

A Viable Alternative to the US/China Duopoly?

The dominance of US proprietary models and Chinese open-weight models has created a “sovereignty gap” for European tech, and Devstral 2 aims to fill it. Unlike the restrictive licensing of some competitors, Mistral’s commitment to open weights (Apache 2.0 or similar permissive licenses for the smaller models) lets the community fine-tune these models for niche languages (like Rust or COBOL) or specific frameworks.

The Verdict: Ready for Production?

Is Devstral 2 ready to replace your current extension? In our tests, the Small 2 variant was fast and accurate on daily tasks such as boilerplate generation, unit testing, and debugging. However, for system architecture design involving massive, multi-file context, the cloud-based Mistral Large 2 is still required. The future of coding is hybrid, with local inference for speed and privacy and cloud inference for heavy lifting, and Mistral now covers both bases.
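
As a rough illustration of that hybrid split, the sketch below routes a request to a local or cloud model based on how much context it carries. Everything in it is an assumption made for illustration: the `CodingRequest` type, the 32,000-token local budget, the four-characters-per-token heuristic, and the rule that restricted code never leaves the machine. It does not call any Mistral API.

```python
from dataclasses import dataclass, field

# Hypothetical budget: how much context we are willing to send to the
# local model. Tune this to the hardware; it is not a Mistral figure.
LOCAL_CONTEXT_BUDGET = 32_000


@dataclass
class CodingRequest:
    task: str                                   # natural-language instruction
    files: dict[str, str] = field(default_factory=dict)  # path -> source text
    contains_restricted_code: bool = False      # must stay behind the firewall


def estimate_tokens(text: str) -> int:
    """Crude token estimate (~4 characters per token); real tokenizers differ."""
    return max(1, len(text) // 4)


def choose_backend(req: CodingRequest, local_budget: int = LOCAL_CONTEXT_BUDGET) -> str:
    """Return "local" or "cloud" for a request."""
    # Privacy takes precedence: restricted code is never sent to the cloud,
    # even if the local model has to work with truncated context.
    if req.contains_restricted_code:
        return "local"
    total = estimate_tokens(req.task) + sum(
        estimate_tokens(source) for source in req.files.values()
    )
    # Large, multi-file context goes to the bigger cloud model.
    return "cloud" if total > local_budget else "local"


quick_fix = CodingRequest("add a unit test", {"utils.py": "def add(a, b):\n    return a + b\n"})
redesign = CodingRequest("redesign the module layout", {f"m{i}.py": "x" * 40_000 for i in range(10)})
```

Here `choose_backend(quick_fix)` stays local, while `choose_backend(redesign)` goes to the cloud because its estimated context exceeds the local budget.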

| Feature | Devstral 2 | Llama 3 | DeepSeek Coder |
|---|---|---|---|
| Context Window | 128k tokens | 128k tokens | 128k tokens |
| Architecture | MoE | Transformer | Transformer |
| On-Device Inference | Yes | No | No |
| Privacy | High (local) | Low (cloud) | Low (cloud) |
| Pass@1 Rate | High | Medium | High |
| License | Apache 2.0 | Custom | Apache 2.0 |
| Specialization | Coding | Generalist | Coding |
| Target Use Case | Local development | Chat | Cloud development |

Tags :

- Mistral AI

- Devstral

- On Device AI

- Coding Assistant