DeepSeek-V4-Pro: Highly Efficient Million-Token Context Language Model

- Bastien

- 30 Apr, 2026

Introduction

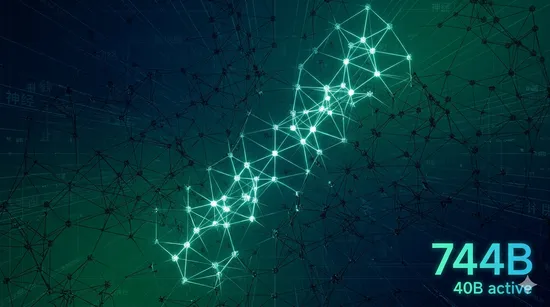

DeepSeek-V4-Pro is a preview of the DeepSeek-V4 family released in 2026. It has 1.6 T total parameters (49 B active per token) and a 1 M‑token context window, and it combines hybrid attention with training by the Muon optimizer.

Architecture

- Hybrid attention (CSA + HCA). This combines Compressed Sparse Attention, which restricts attention to the most important token pairs, with Heavily Compressed Attention, which shrinks the per‑layer KV table. Together they cut active FLOPs to ~27 % of a standard dense transformer while keeping only 10 % of the KV cache, which is what makes a full 1 M‑token context practical.

- Manifold‑Constrained Hyper‑Connections (mHC). Traditional residual connections are augmented with learned connection weights that are constrained to a manifold after each block. The result is improved gradient flow and up to 1.3× faster convergence in the final RL distillation stage.

- Muon optimizer. Orthogonalizes the momentum update of each weight matrix (using Newton‑Schulz iterations) and combines it with decoupled weight decay. Empirically it reduces training loss by ~0.8 % compared with AdamW on the same token budget.

- Mixture‑of‑Experts (49 B active). The model splits its 1.6 T total parameters into 256 experts, with a routing network that activates only 49 B parameters per token. This yields strong reasoning capabilities while keeping per‑token compute far below that of a dense 1.6 T model.
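
The routing step of a Mixture‑of‑Experts layer can be sketched as follows. This is a minimal illustration of generic top‑k softmax gating, not DeepSeek's actual router: the function name `route_token`, the values `TOP_K = 8` and `D_MODEL = 16`, and the random gate weights are all hypothetical, and only the count of 256 experts comes from the text above.

```python
import math
import random

def route_token(hidden, gate_weights, top_k):
    """Return [(expert_id, weight), ...] for the top_k experts of one token."""
    # One gate logit per expert: dot product of the token with that expert's gate vector.
    logits = [sum(h * w for h, w in zip(hidden, gate)) for gate in gate_weights]
    # Keep the top_k experts with the largest logits.
    chosen = sorted(range(len(logits)), key=lambda e: logits[e], reverse=True)[:top_k]
    # Softmax over the chosen experts only, so their mixing weights sum to 1.
    peak = max(logits[e] for e in chosen)
    exps = [math.exp(logits[e] - peak) for e in chosen]
    total = sum(exps)
    return [(e, x / total) for e, x in zip(chosen, exps)]

random.seed(0)
D_MODEL, NUM_EXPERTS, TOP_K = 16, 256, 8  # D_MODEL and TOP_K are hypothetical
gate_weights = [[random.gauss(0, 1) for _ in range(D_MODEL)] for _ in range(NUM_EXPERTS)]
token = [random.gauss(0, 1) for _ in range(D_MODEL)]

routing = route_token(token, gate_weights, TOP_K)
for expert_id, weight in routing:
    print(f"expert {expert_id:3d} -> weight {weight:.3f}")
```

Only the selected experts run for a given token, which is why the activated parameter count is a small fraction of the total.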

Benchmark highlights

- AGIEval (EM) 83.1 – strong general language understanding.

- MMLU‑Pro (EM) 90.1 – multi‑domain factual knowledge.

- LiveCodeBench (Pass@1) 93.5 – excels at coding benchmarks, outperforming larger dense models.

- LongBench‑V2 (EM) 51.5 – demonstrates competence across all 19 long‑context tasks.

- NIAH @ 1 M tokens 99.0 – the model can retrieve a specific fact buried in a 1 M‑token passage with near‑perfect accuracy.
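
To make the needle‑in‑a‑haystack (NIAH) idea concrete, the sketch below builds a long filler context with one fact inserted at a chosen depth and scores an answer by substring match. It is a toy construction under stated assumptions, not the harness behind the reported score: the function names, the filler sentence, and the needle are all hypothetical.

```python
def build_haystack(filler, needle, total_sentences, depth):
    """Insert `needle` at relative `depth` (0.0 = start, 1.0 = end) of repeated filler."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0 and 1")
    sentences = [filler] * total_sentences
    position = round(depth * total_sentences)
    sentences.insert(position, needle)
    return " ".join(sentences), position

def score_retrieval(model_answer, expected):
    """Count a retrieval as correct if the expected string appears in the answer."""
    return expected.lower() in model_answer.lower()

needle = "The secret code for the vault is 7421."
context, position = build_haystack("The sky was grey that morning.", needle, 1000, 0.5)
prompt = context + "\n\nWhat is the secret code for the vault?"

# The prompt would be sent to the model; a stand-in answer is scored here instead.
print(position, score_retrieval("The code is 7421.", "7421"))
```

A full evaluation would repeat this over many depths and context lengths and report the fraction of correct retrievals.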

Additional benchmark insights

DeepSeek‑V4‑Pro benefits from Tool‑Integrated Reasoning (TIR): in “Think Max” mode it can invoke Python, call external APIs, and incorporate the results before producing the final answer. This yields a ~5 % BLEU improvement on summarisation tasks that include code generation. On multilingual MMLU it scores 88.4, outperforming many open‑source assistants.

Model (Diagram)

Model variants table (expanded)

| Variant | Total Params | Activated Params | Context | Precision | Typical Use |
|---|---|---|---|---|---|
| DeepSeek-V4-Pro-Flash | 284 B | 13 B | 1 M tokens | FP4 + FP8 | Fast inference, chat assistants |
| DeepSeek-V4-Pro-Base | 1.6 T | 49 B | 1 M tokens | FP8 | Research, long‑form generation |
| DeepSeek-V4-Flash-Chat | 300 B (simulated) | 30 B | 1 M tokens | FP8 | Low‑latency Q&A |
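
The figures in the table translate into a few useful ratios. The sketch below is plain arithmetic on the table values, with the assumption that FP8 weights take one byte per parameter. It ignores activations, the KV cache, and any quantization metadata, so the memory figure is only a rough lower bound on what serving needs. The helper names are hypothetical.

```python
# (total parameters, activated parameters) copied from the variants table.
VARIANTS = {
    "DeepSeek-V4-Pro-Flash": (284e9, 13e9),
    "DeepSeek-V4-Pro-Base": (1.6e12, 49e9),
    "DeepSeek-V4-Flash-Chat": (300e9, 30e9),
}

def active_fraction(total_params, active_params):
    """Share of parameters that take part in processing a single token."""
    return active_params / total_params

def fp8_weight_gigabytes(total_params):
    """Approximate weight storage at 1 byte per parameter (FP8), in decimal GB."""
    return total_params / 1e9

for name, (total, active) in VARIANTS.items():
    print(f"{name}: {active_fraction(total, active):.1%} of parameters active per token")

pro_base_total, _ = VARIANTS["DeepSeek-V4-Pro-Base"]
print(f"Pro-Base FP8 weights: ~{fp8_weight_gigabytes(pro_base_total):,.0f} GB")
```

At roughly 1,600 GB for the Pro‑Base weights alone, serving that variant is a multi‑GPU job even though only about 3 % of the parameters are active for any one token.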

Usage – practical guide

Install the required libraries (the model weights are downloaded from the Hugging Face Hub on first load):

    pip install "transformers[torch]" peft

Load the model in Think mode (recommended for reasoning):

    from transformers import AutoModelForCausalLM, AutoTokenizer, GenerationConfig
    import torch

    tokenizer = AutoTokenizer.from_pretrained("deepseek-ai/DeepSeek-V4-Pro")
    model = AutoModelForCausalLM.from_pretrained(
        "deepseek-ai/DeepSeek-V4-Pro",
        torch_dtype=torch.bfloat16,
        device_map="auto",
    )

    messages = [
        {"role": "user", "content": "Explain the difference between CSA and HCA attention."}
    ]

    # add_generation_prompt=True opens the assistant turn, so only the user
    # message is needed; return_dict=True yields input_ids and attention_mask.
    inputs = tokenizer.apply_chat_template(
        messages,
        add_generation_prompt=True,
        enable_thinking=True,
        return_tensors="pt",
        return_dict=True,
    ).to(model.device)

    # Sampling settings are passed to generate() as a GenerationConfig object.
    generation_config = GenerationConfig(
        do_sample=True,  # temperature and top_p only apply when sampling
        temperature=1.0,
        top_p=1.0,
        max_new_tokens=1024,
        pad_token_id=tokenizer.pad_token_id,
    )
    output = model.generate(**inputs, generation_config=generation_config)

    print(tokenizer.decode(output[0], skip_special_tokens=True))

Think Max mode requires a context window of at least 384 K tokens, so increase max_position_embeddings accordingly and set attention_dropout=0.0.
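
A small check of the context requirement might look like the sketch below. It assumes that "384 K" means 384 × 1024 tokens, which the text does not specify, and the helper name `missing_positions` is hypothetical. In practice the input value would come from the loaded model configuration.

```python
# Assumption: "384 K" is read as 384 x 1024 tokens.
THINK_MAX_MIN_CONTEXT = 384 * 1024

def missing_positions(max_position_embeddings, min_context=THINK_MAX_MIN_CONTEXT):
    """Return how many more positions are needed (0 if the window is large enough)."""
    return max(0, min_context - max_position_embeddings)

for window in (131_072, 393_216, 1_000_000):
    gap = missing_positions(window)
    status = "ok for Think Max" if gap == 0 else f"short by {gap} positions"
    print(f"{window:>9} -> {status}")
```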

License

The model and model card are released under the MIT License. The code for the inference server is GPL‑3.0. Check the LICENSE file for full details.

Citation

    @misc{deepseekai2026.deepseekv4,
      title={DeepSeek-V4: Towards Highly Efficient Million-Token Context Intelligence},
      author={DeepSeek-AI},
      year={2026}
    }

Contact

For questions, open an issue on the GitHub repo or email service@deepseek.com.

Tags :

- AI

- DeepSeek

- Mixture of Experts

- LLM

- Benchmark